Introduction

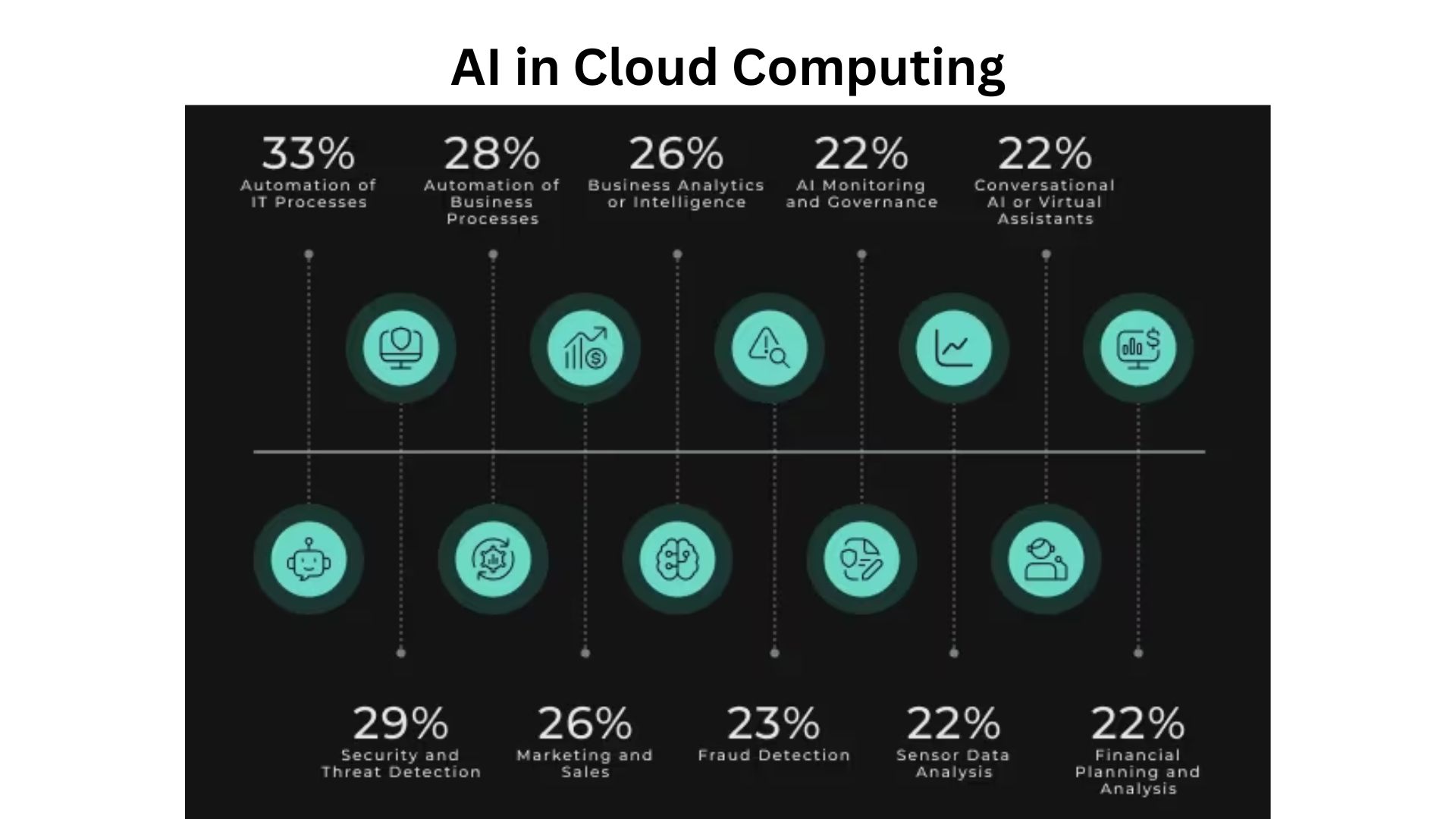

AI cloud computing combines two ideas: the cloud, which gives you on-demand storage and computing over the internet, and artificial intelligence. Put them together, and you get a system where anyone, from small startups to large enterprises, can run smart AI tools without purchasing costly hardware. What makes it exciting is the scale: you can spin up resources to train models, analyze data, or deploy AI apps in minutes, all through the cloud.

In this article, I’d like to talk more about AI cloud computing statistics that show how fast it’s growing, how much is being spent, and why it’s becoming the backbone of modern technology. Let’s get started.

Editor’s Choice

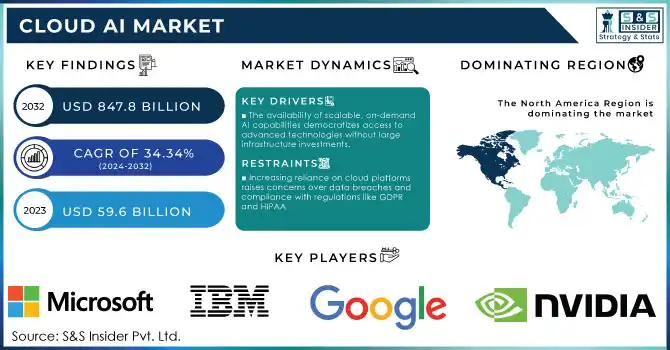

- The global AI cloud computing market is expected to cross US$650 billion by 2030, growing at a CAGR of over 35%.

- Around 70% of enterprises already rely on AI cloud services for scaling their business and reducing infrastructure costs.

- North America leads adoption, but Asia-Pacific is catching up fast with the highest growth rate.

- By 2026, nearly 75% of organizations are predicted to shift at least one AI workload to the cloud.

- AI cloud platforms like AWS, Microsoft Azure, and Google Cloud dominate with over 65% of market share.

- Healthcare, finance, and retail are among the industries investing the most in AI cloud services.

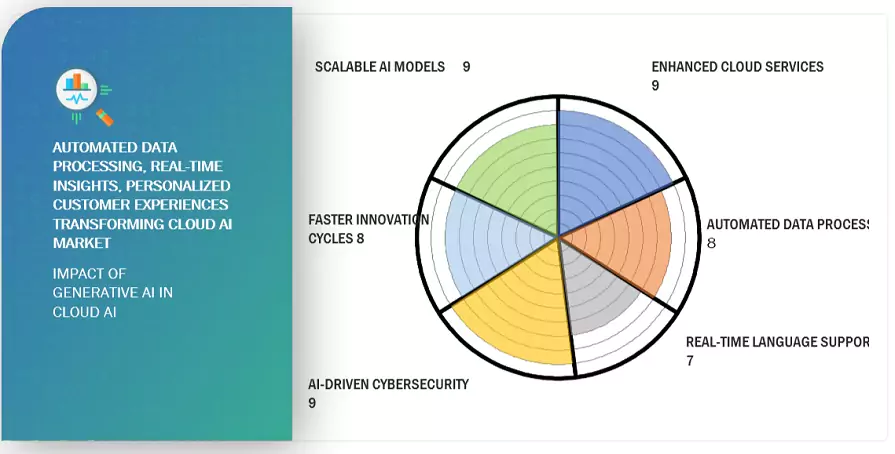

- Generative AI demand has skyrocketed, with 40% of companies already experimenting with AI on cloud platforms.

- Cloud AI saves companies an average of 30 to 40% in operational costs compared to on-premises AI setups.

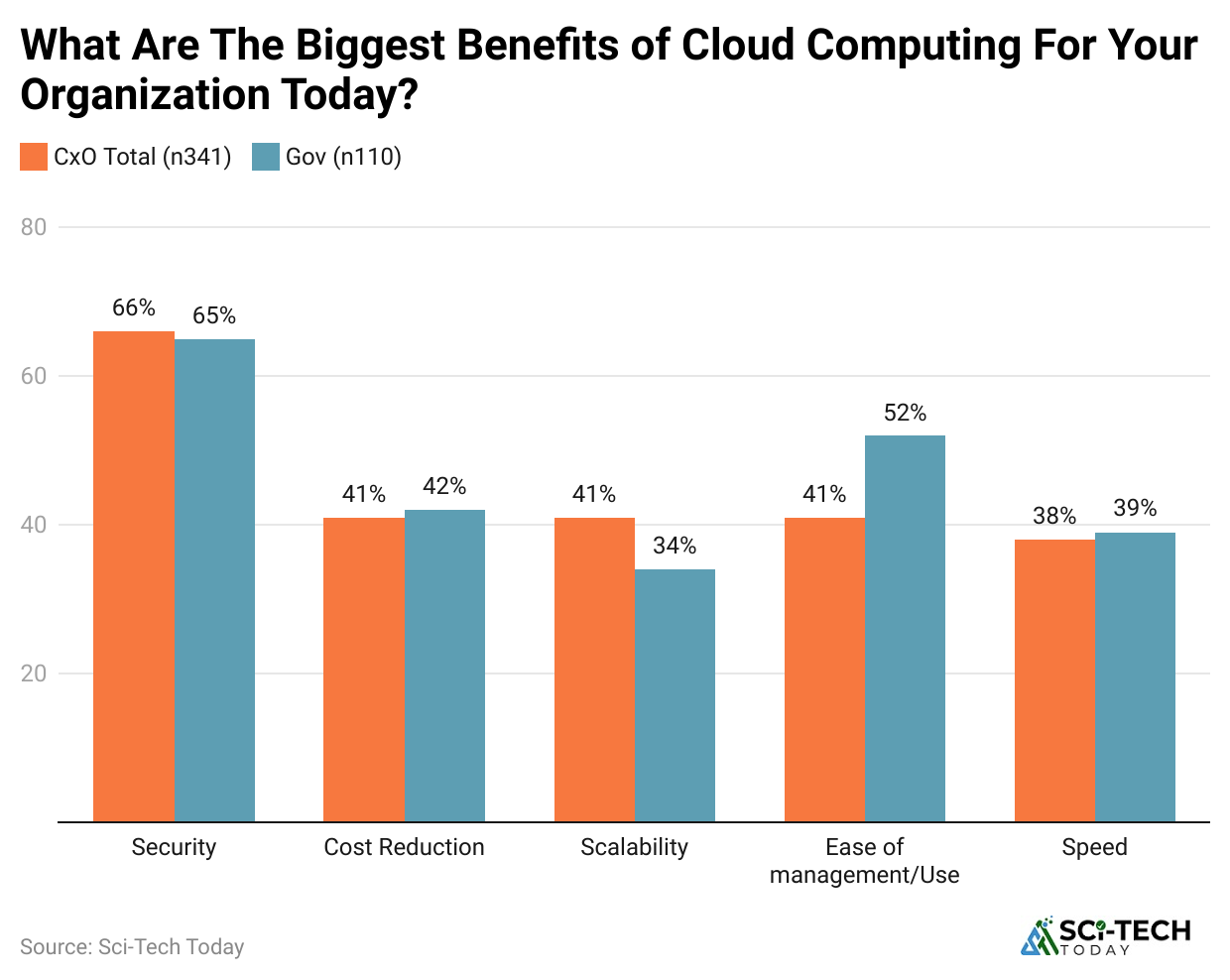

- Data security and compliance remain the top concerns, with nearly 58% of businesses citing it as a challenge.

| Category | Key Statistics |

| Market Size | $650 billion projected by 2030, 35% CAGR |

| Enterprise Adoption | 70% of enterprises are already using AI cloud services |

| Regional Growth | North America leads, Asia-Pacific fastest growth |

| Workload Migration | 75% of organizations will move AI workloads to the cloud by 2026 |

| Market Leaders | AWS, Azure, and Google Cloud hold 65% combined share |

| Industry Usage | Strongest in healthcare, finance, and retail |

| Generative AI on Cloud | 40% of companies are already experimenting with it |

| Cost Savings | 30 to 40% average reduction in infrastructure and operations costs |

| Security Challenges | 58% of businesses see data security and compliance as the main barrier |

Where Did This All Start?

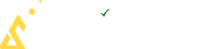

(Source: scoop.market.us)

- In the 2012 to 2016 window, cloud-first ML moved from hobby to production, thanks to cheap object storage, elastic GPU instances, and better frameworks.

- Early public cloud GPUs were scarce, but they made training time drop from weeks to days for teams without on-prem clusters.

- From 2017 to 2020, the model size curve bent upward. Transformer models made compute the kingmaker and pulled workloads into clouds that could deliver clusters on demand.

- Most teams couldn’t get 100 GPUs on-prem, but they could get them in the cloud with a signed agreement.

| Era | What changed | Why clouds mattered |

| 2012 to 2016 | GPUs rentable, S3-like storage, better frameworks | Access over ownership, quick spin-up |

| 2017 to 2020 | Transformers, bigger datasets, and MLOps appear | Elasticity for experiments and failures |

| 2021 to 2023 | Foundation models, first big genAI apps | Burst capacity and global coverage |

| 2024 to 2025 | Full-on genAI wave, inference dominates | Dedicated AI infra, multicloud, new “AI clouds.” |

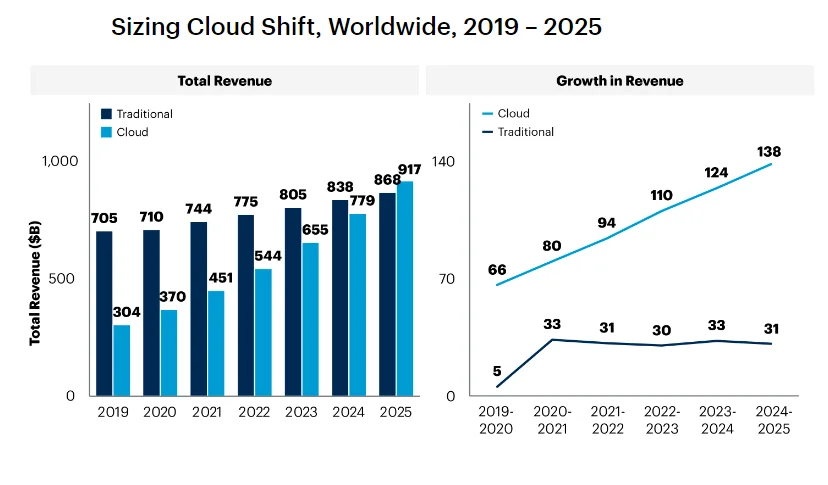

Market Size, Spend, and Growth

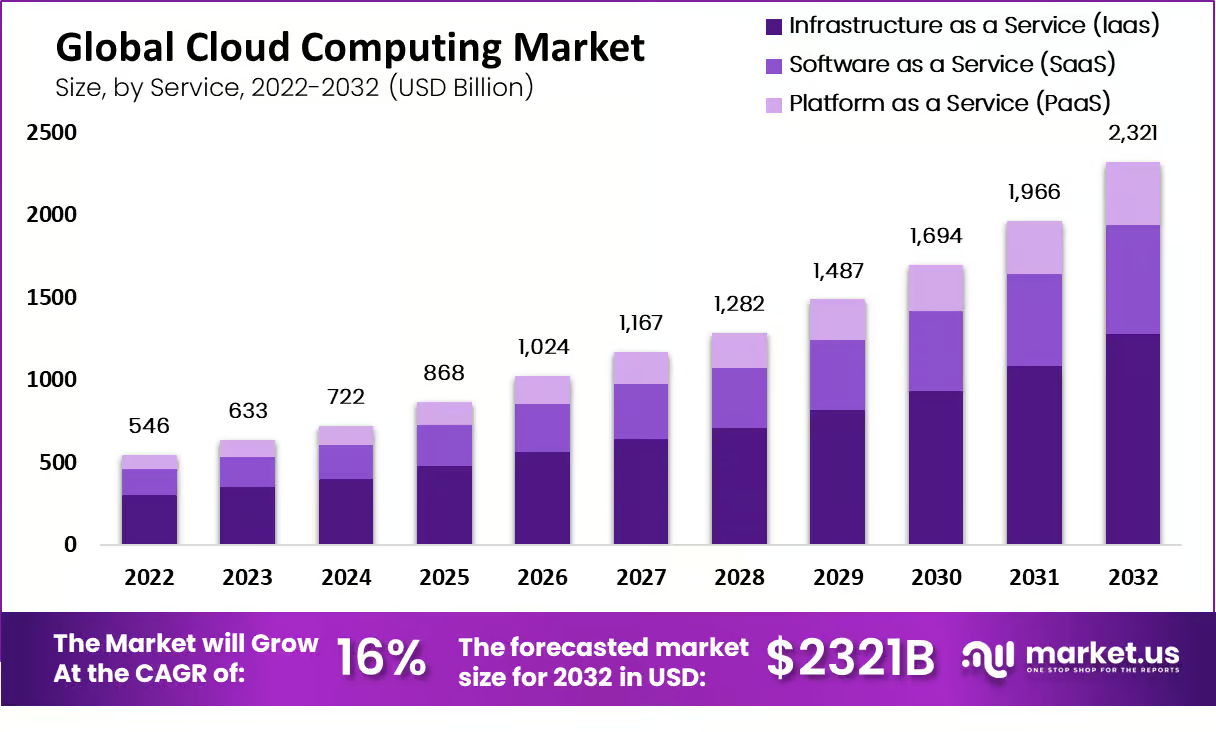

(Reference: precedenceresearch.com)

- Cloud infrastructure spend is at an all-time high. Enterprise spending on cloud infrastructure services hit about $99 billion in Q2 2025, up roughly $20 billion year over year.

- AI demand is the driver behind that delta, with training and inference both lifting usage hours and reserved capacity.

- Provider shares are shifting. In Q2 2025, AWS held roughly 30% of cloud infrastructure services, with Microsoft and Google gaining momentum as AI deals stack up.

- The absolute market grew fast enough that everyone’s revenue rose, but share tells you where AI workloads are landing.

- Hyperscaler revenue base keeps growing. Across hyperscale operators, digital services grew about 23% in 2024, outpacing other segments.

| Metric | Latest figure | Why it matters |

| Cloud infra spend, Q2 2025 | $99B quarter | The scale of AI capacity is embedded here. |

| AWS share, Q2 2025 | 30% | Still the largest footprint for AI workloads by share. |

| Digital services growth 2024 | 23% YoY | Cloud AI is pulling the train. |
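The quarterly figures above imply a growth rate that the text does not state directly. As a rough sketch, assuming the "about $99 billion" and "roughly $20 billion" figures are taken at face value (both are rounded in the source, so the result is approximate), the implied year-over-year growth can be worked out like this. The function name `yoy_growth` is just an illustrative helper.

```python
def yoy_growth(current: float, increase: float) -> float:
    """Return year-over-year growth from the current value and the absolute increase."""
    prior = current - increase  # value in the same quarter one year earlier
    if prior <= 0:
        raise ValueError("prior-period value must be positive")
    return increase / prior


# Rounded figures from the bullets above, in billions of US dollars.
q2_2025_spend = 99.0
yoy_increase = 20.0

growth = yoy_growth(q2_2025_spend, yoy_increase)
print(f"Implied prior-year quarter: ${q2_2025_spend - yoy_increase:.0f}B")
print(f"Implied YoY growth: {growth:.1%}")
```

On these rounded inputs, the quarter grew by roughly a quarter year over year, which is the delta the AI-demand bullet refers to.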

Enterprise AI Adoption and Where Cloud Fits

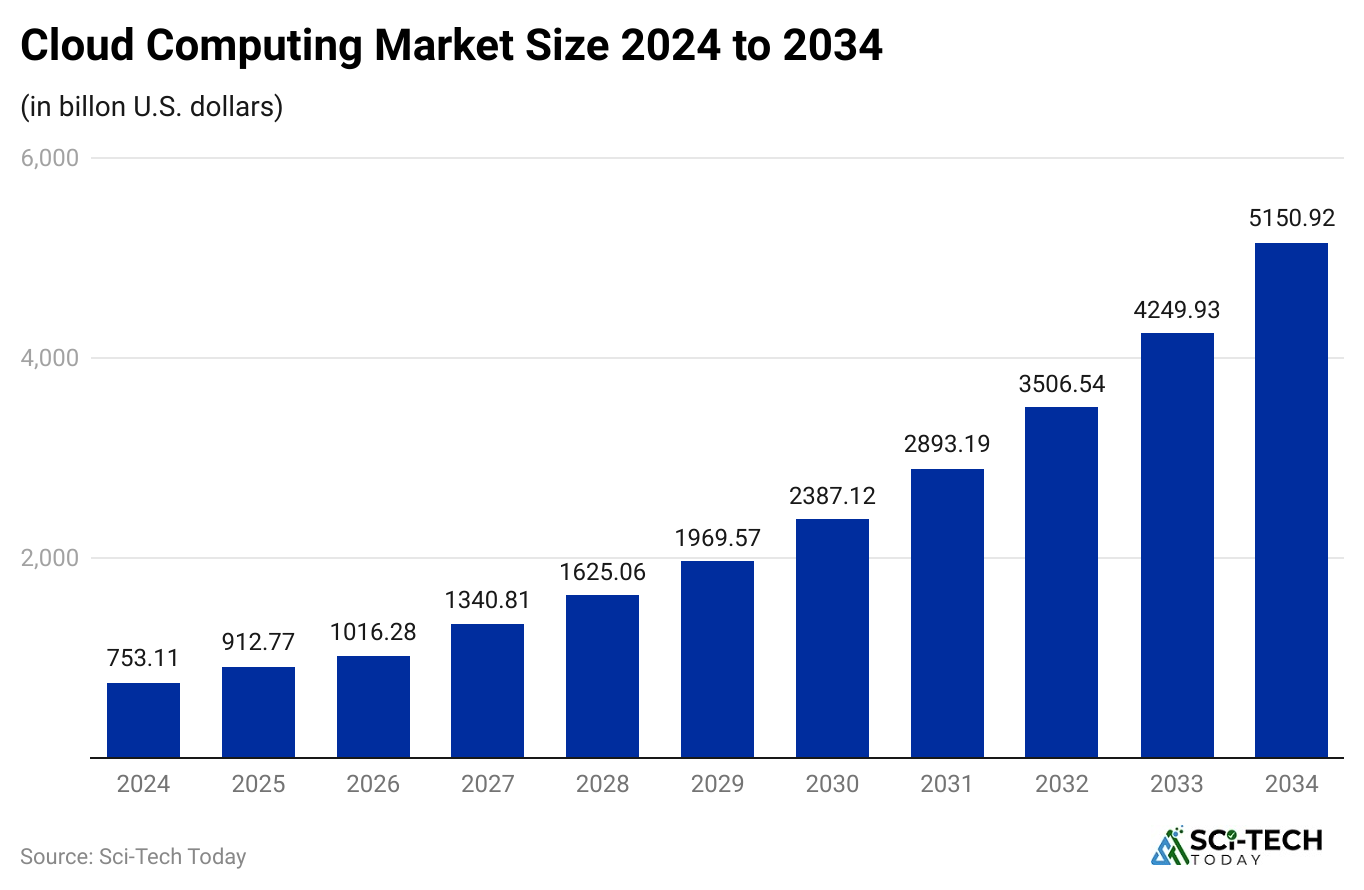

(Source: latentai.com)

- AI is mainstream in enterprises. In McKinsey’s latest global pulse, about 78% of organizations used AI in at least one function in 2024, and the use of generative AI reached roughly 71% by late 2024.

- Functions using AI have expanded. Companies report using AI across more functions than a year earlier, with an average of around three functions per company, and the heaviest use in IT, sales, and marketing.

| Signal | Data point | Read |

| Any AI in orgs | 78% | Past the pilot stage. |

| GenAI in orgs | 71% | Direct lift to inference hours. |

| Functions per org | 3 on avg | From pilots to platform usage. |

The Cost Side That Steers Design Decisions

(Source: marketsandmarkets.com)

- Frontier model training costs got real. Stanford’s AI Index estimated GPT-4 training compute at roughly $78 million and Gemini Ultra near $191 million.

- Even if you debate the exact bills, the order of magnitude sets the bar for cluster scale and the value of long-term cloud contracts.

- Azure’s AI pull-through is measurable. Microsoft disclosed that 16 points of Azure growth in a recent quarter came from AI services.

- That’s not a soft metric. It captures managed model APIs, GPUs, and AI tuning workloads that keep clusters hot.

- Google Cloud’s AI backlog is stacking up. In Q2 2025, Google Cloud revenue grew 32% to $13.6B, and commentary highlighted growth in AI Infrastructure and Generative AI Solutions.

| Topic | Data | Meaning |

| Frontier train cost | $78M GPT-4, $191M Gemini Ultra | You architect for cluster efficiency and reuse. |

| Azure AI growth impact | 16 points of Azure growth | AI is now a primary revenue driver. |

| Google Cloud Q2 2025 | $13.6B, 32% YoY | AI infra and genAI solutions lead. |

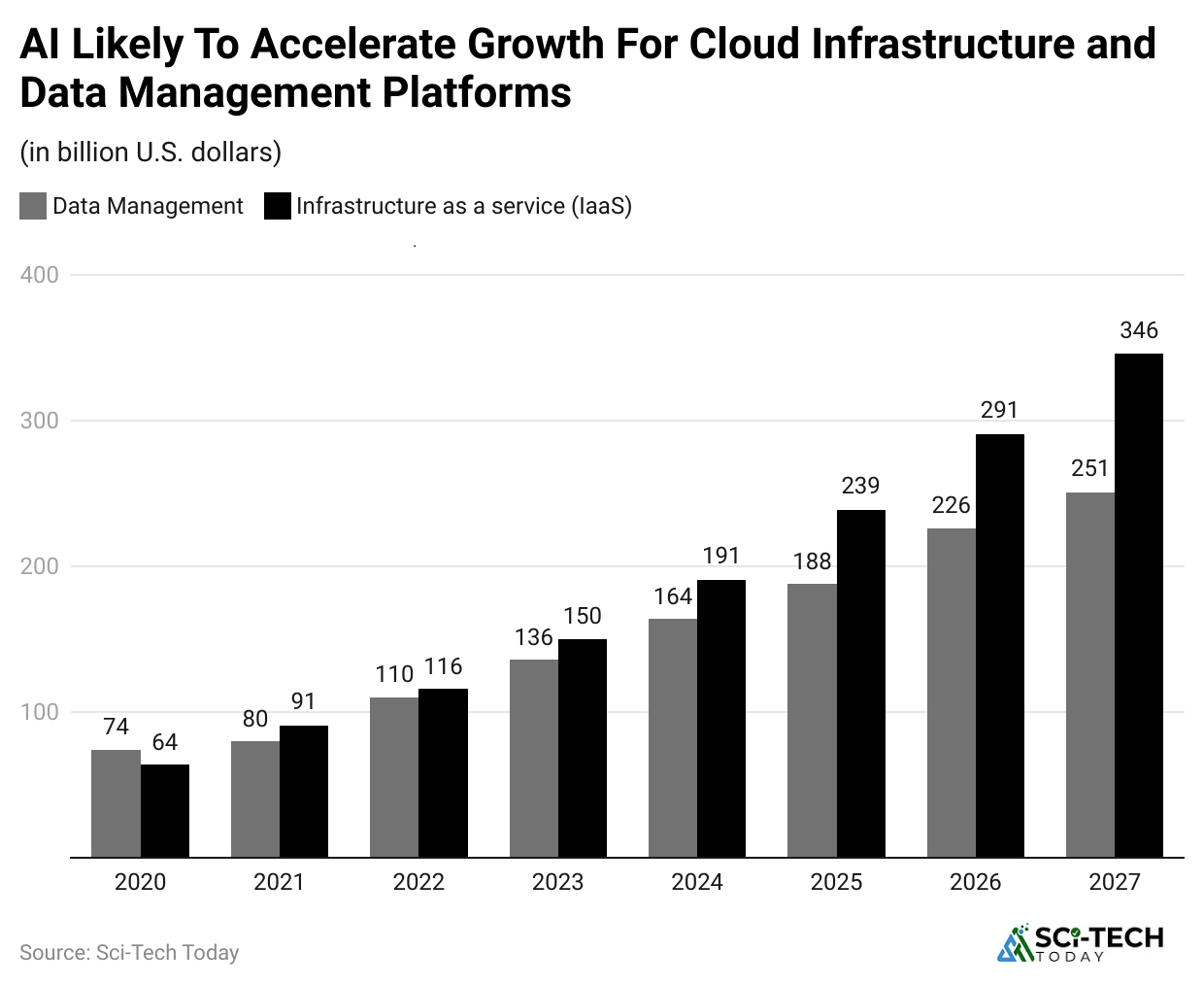

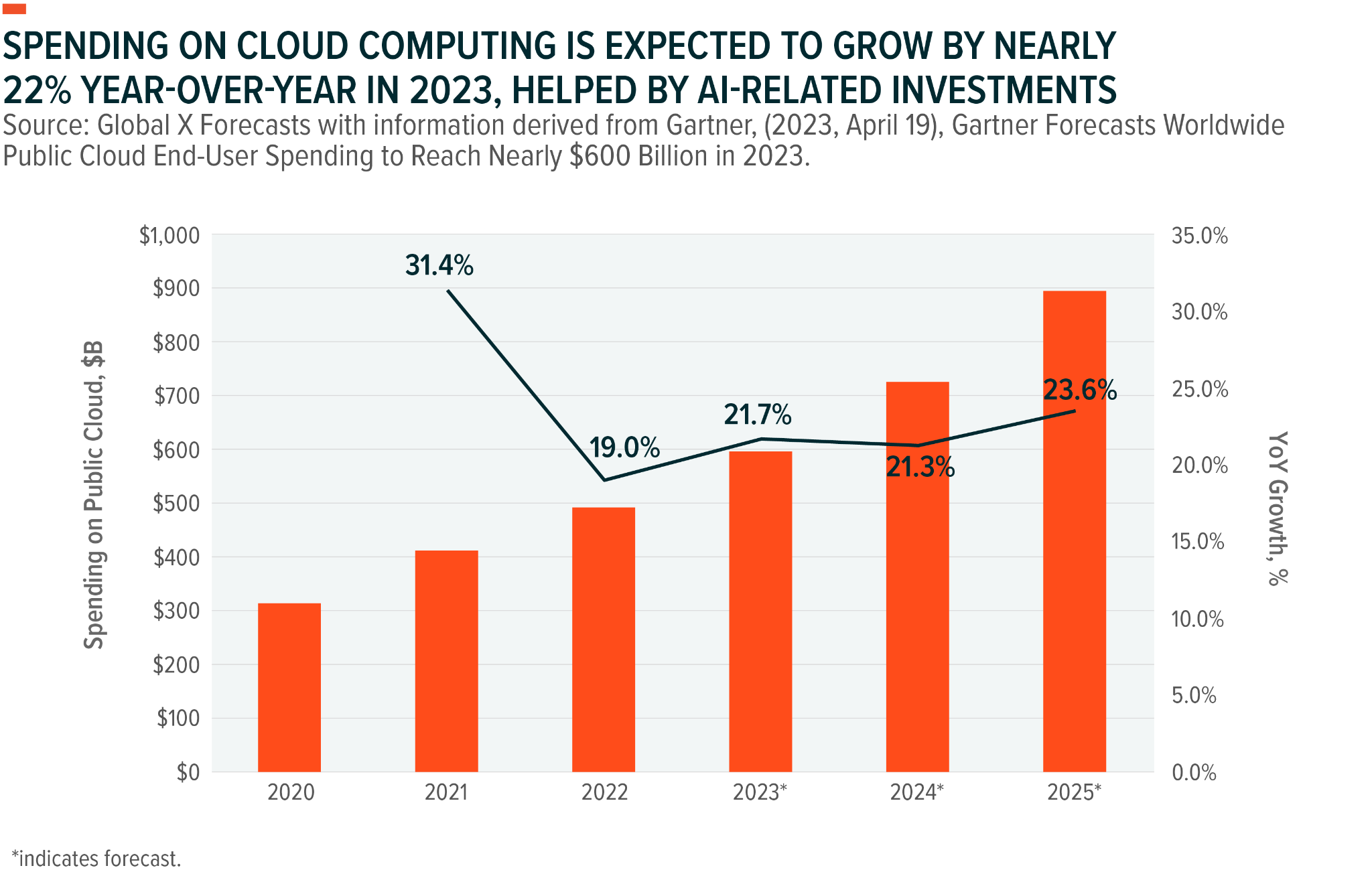

Spending Forecasts

(Reference: cloudzero.com)

- Total AI solutions spend is projected to be around $307B in 2025, tracking toward $632B by 2028.

- This bundles software, services, and infrastructure tied to AI strategies. It’s the pipeline that feeds cloud GPU hours and MLOps tooling.

- AI infrastructure alone is forecast to hit $223B by 2028, with about 82% of that in cloud environments.

- GenAI spend inside that total is set to rise fast. IDC notes genAI pushing toward $202B by 2028.

| Bucket | 2025 | 2028 | Note |

| AI solutions total | $307B | $632B | Software, services, and infrastructure. |

| AI infrastructure | n/a | $223B | 82% cloud-deployed. |

| GenAI slice | n/a | $202B | About a third of total AI by 2028. |
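The forecast gives two endpoints for total AI solutions spend ($307B in 2025 and $632B in 2028) but not the growth rate between them. A minimal sketch of the standard compound annual growth rate (CAGR) formula, applied to those endpoints and to the two shares mentioned above, might look like this. The helper name `cagr` is illustrative, and the inputs are the rounded figures from this section.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    if start <= 0 or end <= 0 or years <= 0:
        raise ValueError("start, end, and years must all be positive")
    return (end / start) ** (1 / years) - 1


# Rounded figures from this section, in billions of US dollars.
total_2025, total_2028 = 307.0, 632.0
infra_2028, cloud_share = 223.0, 0.82
genai_2028 = 202.0

implied_cagr = cagr(total_2025, total_2028, years=3)
cloud_infra_2028 = infra_2028 * cloud_share   # cloud-deployed AI infrastructure
genai_share_2028 = genai_2028 / total_2028    # genAI as a share of total AI spend

print(f"Implied CAGR 2025 to 2028: {implied_cagr:.1%}")
print(f"Cloud-deployed AI infrastructure in 2028: ${cloud_infra_2028:.0f}B")
print(f"GenAI share of total AI spend in 2028: {genai_share_2028:.0%}")
```

On these inputs the total roughly doubles in three years, which works out to an annual rate in the high twenties, and the genAI slice lands close to a third of the 2028 total.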

Provider Dynamics and Big AI Deals

(Source: snsinsider.com)

- Meta signed a multiyear, $10B cloud deal with Google in 2025 to cover AI capacity. That’s notable because Meta has huge in-house data centers, yet still needs external AI cloud lanes for burst and regional coverage.

- Microsoft Azure is showing momentum. The company reported Azure and other cloud services revenue up 33% with substantial AI contribution, and total Microsoft quarterly revenue of $76.4B in calendar Q2 2025. Scale like this underwrites the GPU buildouts.

- Google Cloud keeps stacking backlog. A $106B backlog and a faster pace of mega deals suggest AI platform commitments that convert to multi-year consumption.

| Company | Headline 2025 | So what |

| Meta and Google Cloud | $10B over 6 years | Even the largest self-hosters rent big AI capacity. |

| Microsoft Azure | 33% growth, AI-heavy | AI is the lever on top of the core cloud. |

| Google Cloud | $13.6B Q2 revenue, backlog $106B | Multi-year AI commitments are real. |

Compute Supply and The GPU Economy

(Source: market.us)

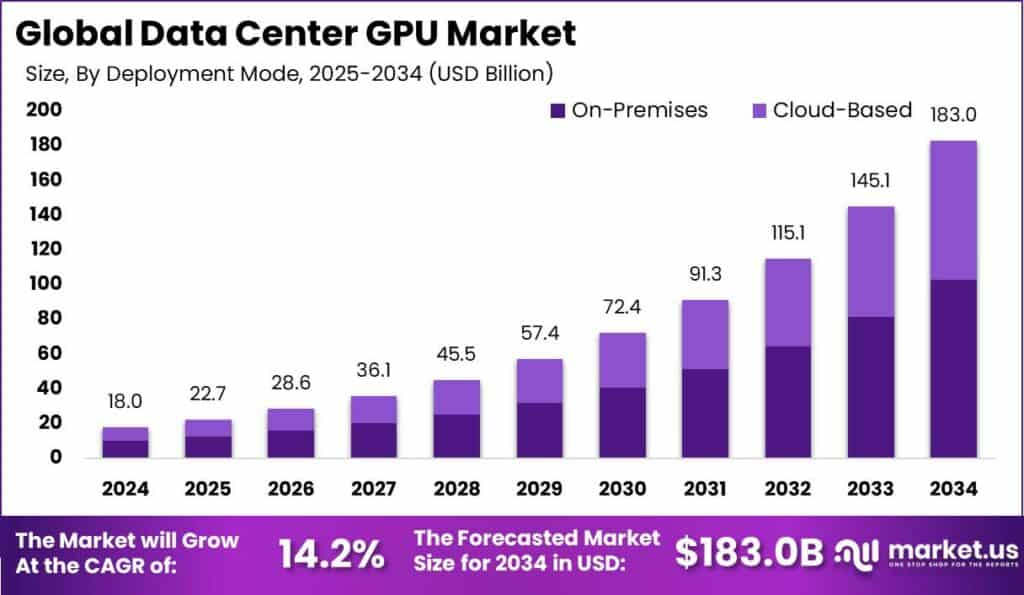

- NVIDIA data center revenue hit $39.1B in one quarter (Q1 FY26, Apr 2025), up 73% year over year.

- AI-specialist clouds are scaling. CoreWeave disclosed plans to invest $20B to $23B in 2025 to expand AI infrastructure, and later announced a deal that includes 840 MW of existing power to support HPC contracts.

- Hyperscaler capex is huge. Analysts expect the big four to spend hundreds of billions on AI infrastructure from 2024 to 2026, with 2025 as a peak year.

| Signal | Data point | Meaning |

| NVIDIA DC revenue | $39.1B Q1 FY26 | Accelerator demand is still rising. |

| CoreWeave 2025 capex | $20 to 23B | Dedicated AI cloud capacity wave. |

| Power under contract | 840 MW | Power is the bottleneck as much as GPUs. |

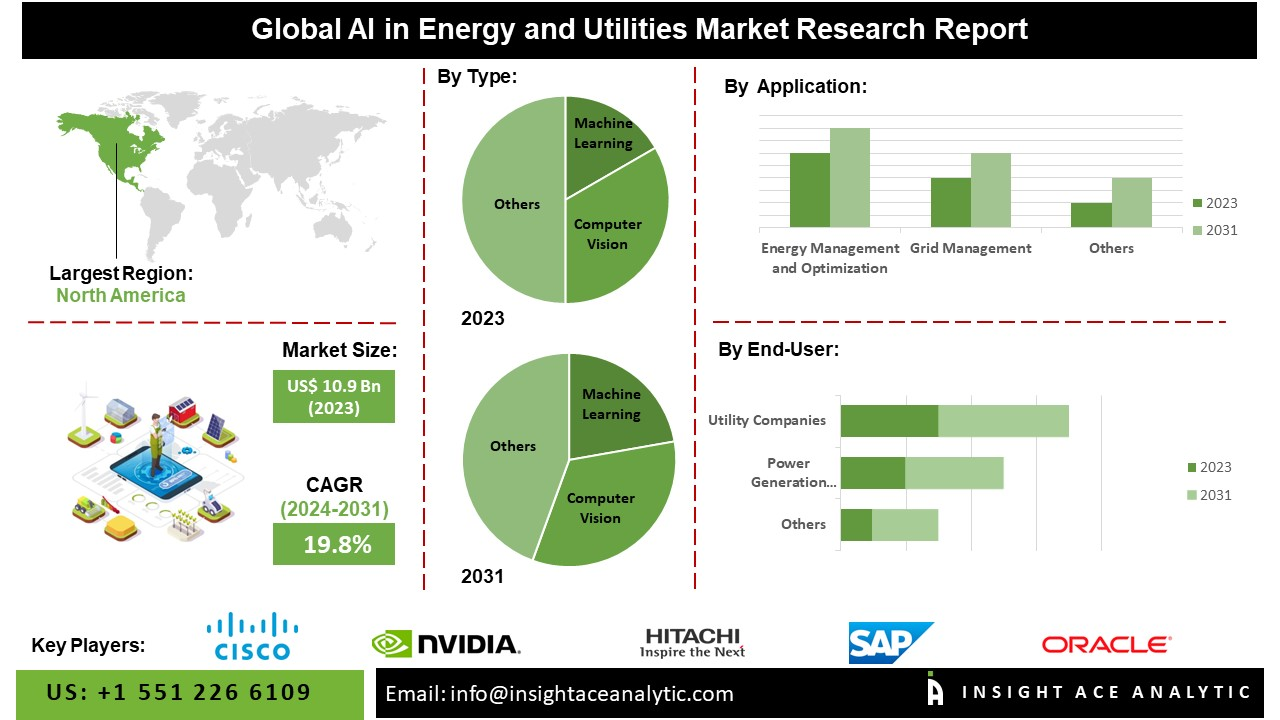

Power, Energy, and The New Constraint

(Source: insightaceanalytic.com)

- Data center electricity consumption could roughly double by 2030 to around 945 TWh, with AI as the biggest driver.

- US share trends are steep. Multiple analyses suggest that US data centers at 3.5 to 4% of electricity today could climb to 8 to 12% by 2030, depending on buildout and efficiency.

- Vendors are chasing efficiency. Reports of order-of-magnitude improvements in energy per request are encouraging, but total demand keeps rising because usage grows faster than efficiency.

| Topic | Number | Takeaway |

| Global DC electricity 2030 | 945 TWh | AI is the main growth driver. |

| US grid share 2030 | 8 to 12% | Planning and siting now is strategic. |

| Efficiency trend | Big per-request drops | Total demand still rises. |

Where Workloads Live and How They’re Split

(Reference: cloudzero.com)

- Training favors fewer, larger regions with the most power and the newest accelerators. You see transient multicloud strategies for availability and price, but gravity settles where the biggest clusters live.

- Inference is everywhere. When a product launches, most costs shift to inference. That pushes you to mix rented cloud GPUs, managed endpoints, and lighter edge inference when latency or cost demands it.

- Edge spend is climbing, with edge computing near $261B in 2025 and accelerating toward $380B by 2028.

| Workload | Typical home | Why |

| Pretraining | Mega regions in big clouds | Power, cluster size, interconnect |

| Finetune | Cloud regions with data gravity | Proximity to source data |

| Inference | Mix of clouds and edge | Latency, cost, privacy |

| Edge spend | $261B in 2025 to $380B in 2028 | Pushdown for latency and savings. |

Pricing, Unit Economics, and What Most Teams Actually Pay

(Source: cloudzero.com)

- Training economics hinges on utilization. At list prices, a months-long run can look scary, but real contracts tie down reserved capacity and spot blocks.

- The step change comes from higher-density nodes and better parallel efficiency, not coupon codes.

- Inference costs scale with users. Model choice, context length, and caching policies change the total bill more than a 5% discount on GPUs.

- Teams that move from general models to distilled or task-specific models usually cut variable cost by 2 to 5x without killing quality.

| Lever | What changes the bill | Impact |

| Parallel efficiency | Better kernels, schedulers | Fewer idle GPUs, faster time-to-train |

| Distillation | Smaller student models | 2 to 5x cheaper inference at similar quality |

| Context policy | Window size, caching | Request cost often halves with smart caching |

| Placement | Right region, right chip | Price-to-performance wins over raw price |
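To make the levers above concrete, here is a rough back-of-the-envelope cost model. It is only a sketch: the request volume, token counts, and per-token price are hypothetical placeholders, not real provider pricing, and it assumes cached requests cost nothing, which is a simplification. The 3x distillation factor is picked from inside the 2 to 5x range quoted above, and the 50% cache hit rate mirrors the "often halves" claim in the table.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class InferenceProfile:
    requests_per_month: int
    tokens_per_request: int
    price_per_million_tokens: float  # hypothetical USD price, not a real quote
    cache_hit_rate: float = 0.0      # fraction of requests served from cache


def monthly_cost(p: InferenceProfile) -> float:
    """Monthly inference bill, assuming cache hits are free (a simplification)."""
    if not 0.0 <= p.cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    billable_requests = p.requests_per_month * (1.0 - p.cache_hit_rate)
    billable_tokens = billable_requests * p.tokens_per_request
    return billable_tokens / 1_000_000 * p.price_per_million_tokens


# Hypothetical baseline: 1M requests a month, 2,000 tokens each, $10 per 1M tokens.
baseline = InferenceProfile(1_000_000, 2_000, 10.0)

scenarios = {
    "baseline": baseline,
    "5% GPU discount": replace(baseline, price_per_million_tokens=10.0 * 0.95),
    "distilled model (3x cheaper)": replace(baseline, price_per_million_tokens=10.0 / 3),
    "50% cache hit rate": replace(baseline, cache_hit_rate=0.5),
}

for name, profile in scenarios.items():
    print(f"{name:30s} ${monthly_cost(profile):>10,.2f}")
```

Even with made-up prices, the ordering is the point: a 5% discount barely moves the bill, while model choice and caching change it by large multiples.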

Data Infrastructure and Pipelines

(Reference: globalxetfs.com)

- AI without strong data plumbing fails. 2025 has seen a wave of data-infrastructure M&A because the bottleneck isn’t only GPUs, it’s clean, labeled, governed data that won’t break production. Tech M&A in 2025 tilted toward data foundations as AI scaled.

- Feature stores, vector DBs, and governance became table stakes. The result is more spending on cloud storage tiers, streaming, and cataloging. It’s not glamorous, but it’s where a lot of AI cloud spending actually shows up on the bill.

| Layer | Why it matters | Typical cloud impact |

| Ingest and streaming | Fresh data into models | Egress fees, streaming compute |

| Feature store and vector DB | Reuse and retrieval | Hot storage, bursty CPU/GPU |

| Governance | Audits and lineage | Catalog services, policy engines |

| Labeling and eval | Better ground truth | Storage, structured pipelines |

Benchmarks That Actually Move The Budget

(Reference: cloudzero.com)

- Latency to the first token is the most honest inference KPI. If your P95 slips, you will overprovision or lose users. Teams buy capacity to protect tail latency and choose regions that keep hops short.

- Cost per successful task beats raw tokens. Leaders calculate cost per solved ticket or per qualified lead, not per million tokens alone.

| KPI | What it captures | Why finance cares |

| P95 latency | Real user experience | Drives capacity decisions |

| Cost per outcome | True unit economics | Compares models and clouds |

| Uptime under load | Reliability at peak | SLA penalties, churn risk |

| Drift rate | Model aging | Signals retraining spend |
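The two headline KPIs above are simple to compute once you log latencies and outcomes. The sketch below uses the nearest-rank percentile method (one of several common definitions, chosen here for simplicity) and entirely made-up sample data; the model names, costs, and ticket counts are hypothetical.

```python
import math


def percentile_nearest_rank(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def cost_per_outcome(total_cost: float, successes: int) -> float:
    """Total spend divided by the number of successfully completed tasks."""
    if successes <= 0:
        raise ValueError("successes must be positive")
    return total_cost / successes


# Hypothetical time-to-first-token samples in milliseconds, with two slow outliers.
latencies_ms = [180, 210, 190, 250, 220, 205, 1200, 230, 195, 240,
                215, 200, 185, 225, 900, 235, 210, 198, 222, 208]
p50 = percentile_nearest_rank(latencies_ms, 50)
p95 = percentile_nearest_rank(latencies_ms, 95)
print(f"P50 = {p50} ms, P95 = {p95} ms")

# Hypothetical comparison: the model with the bigger bill solves more tickets.
small_model = cost_per_outcome(total_cost=400.0, successes=500)
large_model = cost_per_outcome(total_cost=600.0, successes=900)
print(f"small model: ${small_model:.3f} per solved ticket")
print(f"large model: ${large_model:.3f} per solved ticket")
```

In this toy data the median looks healthy while the tail does not, and the model with the larger total bill is cheaper per solved ticket, which is why both KPIs tell finance more than raw token counts.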

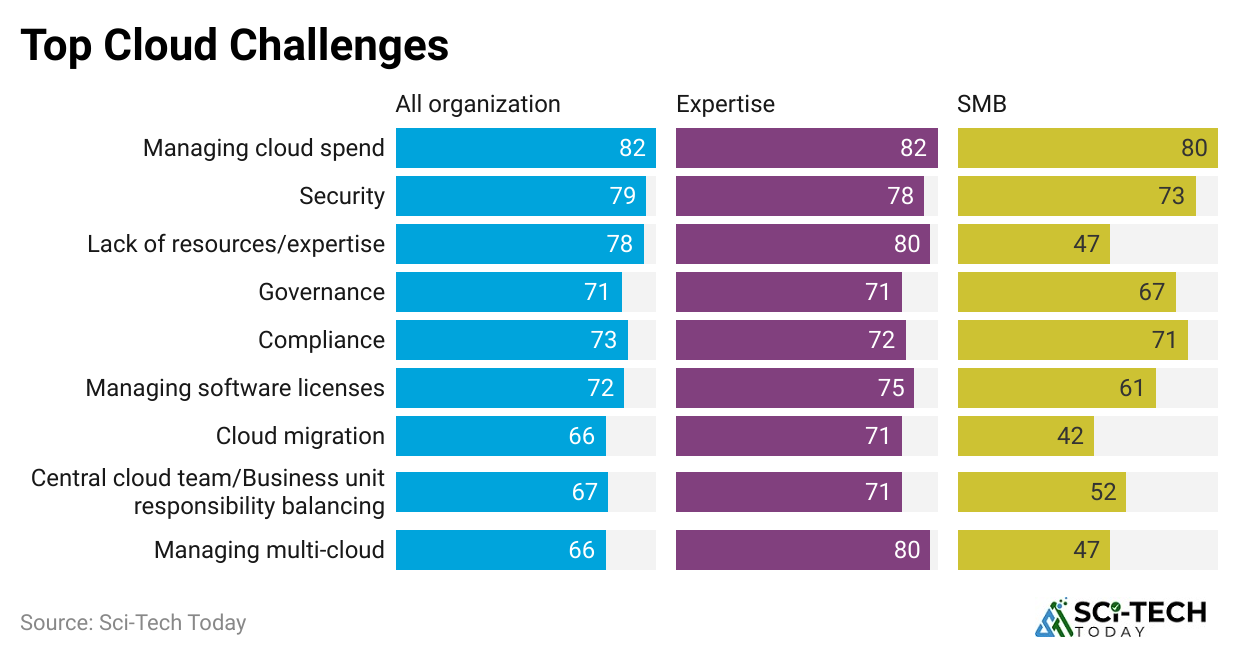

Security, Compliance, and The AI Surface Area

(Source: sapient.pro)

- AI expands the attack surface. More endpoints, more secrets, more data paths. Most enterprises now funnel inference through managed gateways to enforce data retention and PII scrubbing policies.

- Regulatory load is rising. 2024 saw a jump in AI-related rules and guidance. The upshot in the cloud is regional controls, model risk assessments, and more auditable logs.

| Control | Purpose | Cloud effect |

| Prompt/response filters | Stop data leakage | Gateway services in front of models |

| Key management | Secrets safety | HSM and KMS usage growth |

| Data residency | Meet local rules | Region pinning, multicloud |

| Audit logging | Investigations | Hot storage and SIEM costs |
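As an illustration of the prompt/response filter idea, here is a deliberately minimal sketch of a PII scrubbing step that a gateway could run before a prompt reaches a model or a log. The two regular expressions are simplistic assumptions for demonstration only; a production gateway would rely on a dedicated, tested PII detection service rather than these patterns, and `scrub_pii` is a hypothetical helper name.

```python
import re

# Simplistic illustrative patterns; real PII detection needs far broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def scrub_pii(text: str) -> str:
    """Replace email addresses and phone-like number runs with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


prompt = "Contact jane.doe@example.com or +1 415-555-0100 today"
print(scrub_pii(prompt))
```

Running the filter at the gateway, rather than in each application, keeps the retention and scrubbing policy in one auditable place.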

Team Structure, Skills, and The Human Side

(Source: mdpi.com)

- Teams are smaller than you think. A lean pod can ship meaningful AI features with a foundation model, a strong data engineer, and a pragmatic product owner.

- MLOps maturity separates the winners. The teams with reproducible pipelines, eval harnesses, and clear rollback plans spend less on mistakes.

| Role | What they own | Why it saves money |

| Data platform | Reliable pipelines | Less rework, fewer outages |

| ML engineer | Training and tuning | Fits the model to the problem |

| App engineer | Integration and UX | Turns tokens into value |

| FinOps | Unit economics | Keeps spending tied to ROI |

What To Expect Next?

(Source: globalxetfs.com)

- More multicloud, but pragmatic. Big AI buyers will keep at least two lanes for training and three for inference, but they won’t stretch themselves thin.

- The deciding factor is still where the best accelerators and power are available in a given quarter.

- AI backlog converts to revenue. Cloud backlogs driven by AI will convert steadily as GPUs land and interconnects come online.

- Expect double-digit cloud revenue growth lines as queued workloads go live through 2026.

- Power is a first-order constraint. Siting, private generation, and novel cooling are now part of AI roadmaps.

- Efficient models help, sure, but the grid math doesn’t lie. Plan for latency-aware edge deployments to take real traffic where it makes sense.

| Theme | Practical prediction | Why |

| Multicloud AI | Two training lanes, three inference | Price, availability, latency |

| Backlog burn | Steady 2025 to 2026 conversion | Capacity shipments are catching up |

| Power constraint | Energy becomes a roadmap item | Grid limits are hard bounds |

Quick Buyer’s Checklist You Can Actually Use

(Source: solulab.com)

- Pick the metric that matters. Choose cost per solved task, not cost per million tokens alone. Then tie autoscaling to that metric to stop surprise bills.

- Buy for the bottleneck you really have. If it’s latency, go with a smaller model. If it’s quality, go with a bigger model plus a cache, and pay for better evals.

- Log everything. You can’t fix what you can’t see. Centralize prompt, completion, and outcome logs with strict PII policies.

- Avoid platform sprawl. One feature store, one vector layer, one catalog, if you can help it. Sprawl is a silent cost.

| Decision | Good habit | Why |

| KPIs | Outcome-based cost | Aligns engineering and finance |

| Model choice | Fit to task | Prevents overpaying for quality you don’t use |

| Data path | Central governance | Compliance and speed |

| Platform | Fewer, better pieces | Less integration tax |

Conclusion

Overall, AI cloud computing is becoming the core of how businesses work and scale. This shift to the cloud is changing the rules of the game. These statistics clearly show that this market is growing faster than ever due to demand for smarter tools, flexible infrastructure, and generative AI.

While challenges like security and compliance remain, the opportunities are far bigger. In the coming years, AI cloud computing won’t just support businesses; it will drive them forward. I hope you found this useful. If you have any questions, let me know in the comments section. Thank you.