Market Overview

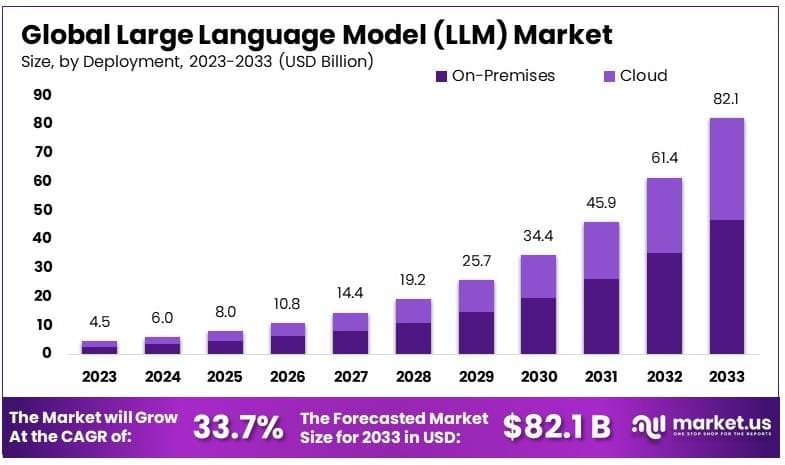

According to Market.us, the global Large Language Model (LLM) market was valued at USD 4.5 billion in 2023, is estimated at about USD 8.0 billion in 2025, and is projected to reach approximately USD 82.1 billion by 2033, expanding at a compound annual growth rate (CAGR) of 33.7% during the forecast period from 2024 to 2033. This growth reflects a decisive shift in how organizations handle language-based tasks, from customer communication and content generation to code development and business analytics, with LLMs becoming a foundational layer across enterprise technology stacks.

Several structural forces are fueling this expansion. The rapid maturation of transformer-based architectures, continuous improvements in training efficiency, and the falling cost of model inference are making LLMs accessible to a wider range of businesses beyond the largest technology companies. At the same time, the integration of LLMs into cloud platforms from providers such as Microsoft Azure, Google Cloud, and Amazon Web Services has lowered the technical barrier for enterprise adoption, allowing companies to access AI-powered language capabilities through straightforward APIs without building or maintaining the underlying infrastructure.


Key Takeaways

- In 2023, on-premises deployment accounted for a 57.7% share, supported by enterprise focus on data control, compliance, and information security.

- Chatbots and Virtual Assistants represented 27.1% of total application share in 2023, driven by rising adoption of AI-enabled customer engagement tools.

- Retail and E-Commerce led among industry verticals with a 27.5% share in 2023, supported by increasing use of AI for personalization, recommendations, and automated support.

- North America held 32.7% market share in 2023, supported by strong research infrastructure, early technology adoption, and large-scale enterprise investments.

Large Language Models Statistics

- Models are generally classified as large when they exceed 1 billion parameters, with training datasets often measured in petabytes to support contextual understanding and content generation at scale.

- In 2023, the top five LLM developers accounted for approximately 88.22% of global market revenue, indicating high market concentration during the early commercialization phase.

- By 2025, an estimated 750 million applications are expected to integrate LLM capabilities across industries.

- Around 50% of digital work processes are projected to be automated by 2025 through applications leveraging language models.

- Of the more than 300 million companies operating globally, nearly 67% are reported to be using generative AI products dependent on LLMs for content-related functions.

- A survey conducted in August 2023 indicated that 58% of companies are actively experimenting with LLMs, while only 23% have initiated or planned commercial deployment, suggesting continued transition from pilot to production environments.


By Deployment Mode

On-premises deployment accounted for 57.7% of the market in 2023 as organizations prioritize control over data, model operations, and compliance processes. Enterprises handling sensitive information prefer localized infrastructure to reduce exposure risks and maintain governance standards. This approach supports regulatory alignment and internal security policies. It also enables organizations to customize AI models based on proprietary data.

Another important factor supporting on-premises deployment is system integration. Many enterprises operate established IT environments that require compatibility with internal applications and databases. Maintaining infrastructure internally allows seamless coordination across systems. This ensures stability and supports long-term operational control.

By Application

Chatbots and virtual assistants represented 27.1% of application demand due to their ability to automate communication and improve service responsiveness. Organizations are deploying these systems to handle customer queries, provide support, and assist with routine interactions. This reduces response time and improves consistency across communication channels. Automated systems also support scalability during high demand periods.

These applications are also being used internally to enhance productivity. Virtual assistants help employees access information, manage tasks, and streamline workflows. Integration with enterprise systems allows contextual responses and real-time assistance. As conversational AI capabilities improve, adoption continues to expand across sectors.

By Industry Vertical

Retail and e-commerce accounted for 27.5% of the market in 2023, making it the leading industry vertical, due to the need for personalized and efficient customer engagement. LLM-based systems are used to power recommendation engines, automate support interactions, and generate product content. These capabilities improve customer experience and support revenue growth. Digital commerce platforms increasingly rely on AI-driven communication.

The sector also benefits from high volumes of customer interaction data. Language models analyze this data to identify preferences and behavioral patterns. This enables targeted recommendations and improved marketing strategies. As online retail continues to expand, demand for AI-driven solutions remains strong.

Report Scope

| Report Features | Description |
| --- | --- |
| Market Value (2023) | USD 4.5 Billion |
| Forecast Revenue (2033) | USD 82.1 Billion |
| CAGR (2024-2033) | 33.7% |
| Base Year for Estimation | 2023 |
| Historic Period | 2018-2023 |
| Forecast Period | 2024-2033 |
| Report Coverage | Revenue Forecast, Market Dynamics, Competitive Landscape, Recent Developments |
| Segments Covered | By Deployment (Cloud, On-premise), By Application (Customer Service, Content Generation, Sentiment Analysis, Code Generation, Chatbots and Virtual Assistant, Language Translation), By Industry Vertical (Healthcare, BFSI, Retail and E-commerce, Media and Entertainment, Others) |
| Regional Analysis | North America – US, Canada; Europe – Germany, France, The UK, Spain, Italy, Rest of Europe; Asia Pacific – China, Japan, South Korea, India, Australia, Singapore, Rest of APAC; Latin America – Brazil, Mexico, Rest of Latin America; Middle East & Africa – South Africa, Saudi Arabia, UAE, Rest of MEA |
| Competitive Landscape | Alibaba Group Holding Limited, Baidu, Inc., Google LLC, Huawei Technologies Co., Ltd., Meta Platforms, Inc., Microsoft Corporation, OpenAI LP, Tencent Holdings Limited, IBM Corporation, Amazon Web Services (AWS), NVIDIA, Other Key Players |
| Customization Scope | Customization for segments, region/country-level will be provided. Moreover, additional customization can be done based on the requirements. |
| Purchase Options | We have three licenses to opt for: Single User License, Multi-User License (Up to 5 Users), Corporate Use License (Unlimited User and Printable PDF) |
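The headline figures in the report scope can be cross-checked with the standard compound-growth formula. The sketch below assumes the 2023 market value is the base and that the 33.7% CAGR compounds annually through 2033 (ten periods); the function names are illustrative and do not come from the report.

```python
def project_value(base_value: float, cagr: float, years: int) -> float:
    """Compound a base value forward by `years` at annual growth rate `cagr`."""
    return base_value * (1.0 + cagr) ** years


def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Back out the annual growth rate implied by a start and end value."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Figures taken from the report scope table (USD billion).
BASE_2023 = 4.5
FORECAST_2033 = 82.1
CAGR = 0.337

# Assumption: annual compounding from the 2023 base year.
value_2025 = project_value(BASE_2023, CAGR, years=2)
value_2033 = project_value(BASE_2023, CAGR, years=10)
rate_check = implied_cagr(BASE_2023, FORECAST_2033, years=10)

print(f"Projected 2025 value: USD {value_2025:.1f} billion")
print(f"Projected 2033 value: USD {value_2033:.1f} billion")
print(f"Implied CAGR 2023-2033: {rate_check:.1%}")
```

Under these assumptions the 2023 base, the roughly USD 8.0 billion figure for 2025, and the 2033 forecast are mutually consistent to within rounding.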

Regional Analysis

North America led the global LLM market in 2023, accounting for 32.7% of total market share and approximately USD 1.47 billion in revenue, supported by the concentration of leading AI companies, high levels of R&D investment, and a well-developed cloud and data infrastructure. The United States accounts for approximately 88% of North American LLM enterprise deployment revenues, with its technology sector, financial institutions, healthcare providers, and government agencies all actively deploying LLM capabilities at scale.

Strong enterprise technology spending, robust academic research output, and a startup ecosystem built around AI applications collectively reinforce the region’s leadership position. North America’s dominance is also supported by its established cloud providers, which have built the most extensive global AI infrastructure and are continuing to invest heavily in expanding GPU capacity and LLM service availability.

U.S. enterprises are allocating a growing share of their technology budgets to AI and automation, and early adoption in sectors such as financial services, legal, and healthcare is producing documented case studies that accelerate adoption across the broader economy. The Asia-Pacific region, led by China, India, and South Korea, is expected to record the fastest growth rate over the forecast period as government investment, digital infrastructure build-out, and the emergence of powerful local models accelerate regional adoption.

Key Driver

Rising Demand for Intelligent Automation and Cloud Integration

Enterprises across industries are under continuous pressure to do more with the same resources, and LLMs offer a direct path to meaningful productivity gains in language-intensive processes. From drafting legal documents and generating financial reports to coding assistance and customer interaction management, LLMs are removing manual effort from tasks that historically required specialized human time. The clarity and measurability of these productivity benefits are making it easier for organizations to justify investment, and the number of proven LLM deployments across industries is growing rapidly as early adopters publish their results.

Cloud infrastructure has been a critical enabler of this adoption wave. The availability of LLM capabilities through cloud APIs from providers like AWS, Microsoft Azure, and Google Cloud allows organizations to access state-of-the-art models without investing in the hardware required to train or run them locally. AWS and NVIDIA announced a significant expansion of their collaboration at GTC 2026, with AWS committing to deploy more than one million NVIDIA GPUs across its global cloud regions starting in 2026, directly expanding the infrastructure available for LLM training and inference at enterprise scale. This kind of infrastructure investment is accelerating the availability and reducing the cost of cloud-based LLM deployment, lowering the barrier to adoption for organizations of all sizes.

Key Restraint

Data Privacy and Regulatory Compliance Complexity

Despite strong commercial momentum, data privacy obligations and regulatory complexity remain among the most significant barriers to widespread LLM deployment, particularly in industries that handle sensitive personal, financial, or health-related data. LLMs trained on large datasets can memorize and reproduce fragments of their training data, creating risks related to GDPR’s right to erasure, purpose limitation requirements, and transparency obligations, all of which are difficult to satisfy when the processing logic operates through billions of model parameters.

In 2025 and 2026, regulatory guidance has become more explicit, with the EDPB Opinion 28/2024 confirming that AI models trained on personal data will in most cases be subject to GDPR, and the EU AI Act adding a parallel layer of risk-based requirements for high-risk AI systems. For organizations operating across jurisdictions, managing a patchwork of compliance requirements significantly increases the cost and complexity of LLM deployment. Cross-border data transfers triggered by API calls to third-party LLM providers can constitute regulated processing events under GDPR, Brazil’s LGPD, and U.S. state privacy laws, requiring organizations to implement geo-fencing, regional inference endpoints, and encryption with key locality to remain compliant.
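As a rough illustration of the regional inference endpoint idea mentioned above, the sketch below routes each request to an endpoint in the same region as the data it carries and refuses to fall back to another region. Everything here is hypothetical: the endpoint URLs, the region codes, and the names `InferenceRequest` and `select_endpoint` are assumptions made for the example, not any provider's API, and routing of this kind is only one part of a compliance program.

```python
from dataclasses import dataclass

# Hypothetical region-to-endpoint mapping; real deployments would load this
# from configuration and use the provider's actual regional URLs.
REGIONAL_ENDPOINTS = {
    "EU": "https://eu.llm.example.com/v1/generate",
    "BR": "https://br.llm.example.com/v1/generate",
    "US": "https://us.llm.example.com/v1/generate",
}


class RegionNotAllowedError(Exception):
    """Raised when no in-region endpoint exists for a request."""


@dataclass(frozen=True)
class InferenceRequest:
    prompt: str
    data_region: str  # region whose rules govern the data in the prompt


def select_endpoint(request: InferenceRequest,
                    endpoints: dict[str, str] = REGIONAL_ENDPOINTS) -> str:
    """Return the endpoint located in the request's own data region.

    Fails closed: if the region has no endpoint, the request is rejected
    rather than silently routed across a border.
    """
    try:
        return endpoints[request.data_region]
    except KeyError:
        raise RegionNotAllowedError(
            f"no in-region endpoint configured for {request.data_region!r}"
        ) from None


if __name__ == "__main__":
    req = InferenceRequest(prompt="Summarize this ticket.", data_region="EU")
    print(select_endpoint(req))
```

Failing closed is the deliberate design choice in this sketch: an unmapped region produces an error that an operator has to resolve, instead of an unnoticed cross-border transfer.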

Key Opportunity

Vertical-Specific LLMs and Emerging Market Expansion

One of the most significant near-term opportunities in the LLM market is the development and adoption of domain-specific models fine-tuned for particular industries. General-purpose LLMs are versatile but often lack the precision required for specialized professional tasks in areas such as clinical medicine, legal analysis, insurance underwriting, and financial compliance. Domain-specific LLMs, trained or fine-tuned on industry-relevant data and evaluated against sector-specific benchmarks, are expected to grow at the fastest rate within the enterprise segment from 2026 to 2033, as they provide the accuracy, regulatory alignment, and workflow integration that general models cannot consistently deliver.

Alongside vertical specialization, the expansion of LLM adoption into Asia-Pacific, Latin America, and the Middle East represents a large and still-developing growth frontier. The Asia-Pacific region is projected to record the highest CAGR over the forecast period, driven by rapid digital transformation, government initiatives promoting AI infrastructure, and the emergence of competitive regional models from Alibaba, Baidu, and others that are calibrated for local language and regulatory environments. Alibaba’s Qwen series and Baidu’s ERNIE models are both gaining significant enterprise traction within China, and their expansion into international markets is expected to intensify competition and accelerate adoption across the Asia-Pacific region.

Key Challenge

Model Commoditization and Infrastructure Cost

As more organizations develop, fine-tune, or deploy their own LLMs, the core model layer is becoming increasingly commoditized, with competition driving prices down and narrowing the performance differentiation between leading commercial and open-source options. By mid-2025, OpenAI’s share of the enterprise LLM market had fallen from approximately 50% at the end of 2023 to around 25%, reflecting how quickly competing models from Google, Anthropic, Meta, Alibaba, and Mistral have narrowed the performance gap.

The cost of accessing frontier model capabilities continues to decline, which benefits end users but compresses margins for model developers and raises the question of where sustainable differentiation will come from in the medium term. At the same time, the infrastructure costs associated with training and running large models remain a significant barrier for new entrants and a meaningful ongoing expense for established players. Training leading frontier models costs tens of millions of dollars, and serving them at scale across millions of daily users requires ongoing investment in GPU infrastructure, cooling, and network capacity.

OpenAI’s move to develop its first proprietary AI chip in collaboration with Broadcom, expected to launch in 2026, reflects the pressure to cut long-term infrastructure costs and reduce dependency on third-party silicon suppliers, a strategy already being pursued by Google, Amazon, and Meta with their own custom chips. Managing these costs while continuing to improve model capability is one of the defining operational challenges for companies competing at the frontier of the LLM market.

Competitive Landscape

The global LLM market is highly concentrated at the frontier level, with the top five vendors together controlling more than 85% of revenue, anchored by integrated technology stacks that span semiconductor hardware to end-user software applications.

This concentration is the result of the enormous capital requirements for training frontier models, the data advantages that accrue to companies with large existing user bases, and the distribution advantages that come from embedding LLM capabilities into widely used products like search engines, productivity software, and cloud platforms.

At the same time, the market is also experiencing growing competition from open-source and open-weight models, regional players, and domain-specific AI companies that are challenging the dominance of the largest players in specific use cases and geographies.

Top Key Players in the Market

- Alibaba Group Holding Limited

- Baidu, Inc.

- Google LLC

- Huawei Technologies Co., Ltd.

- Meta Platforms, Inc.

- Microsoft Corporation

- OpenAI LP

- Tencent Holdings Limited

- IBM Corporation

- Amazon Web Services (AWS)

- NVIDIA

- Other Key Players

Recent Developments

- OpenAI (October 2025) launched GPT-5 Pro at DevDay 2025, offering developers access to its most powerful reasoning model through an API for the first time, alongside AgentKit and ChatKit developer tools for building AI agents and embedding ChatGPT in third-party applications.

- Alibaba (early 2026) released Qwen3.5, a 397 billion-parameter open-weight model with a mixture-of-experts architecture, claiming performance advantages over GPT-5.2 and Gemini 3 Pro on reasoning, coding, and multimodal benchmarks.

- Baidu (January 2026) released ERNIE 5.0 with 2.4 trillion parameters and native multimodal modeling across text, images, audio, and video, reporting performance advantages over Gemini 2.5 Pro and GPT-5 High on more than 40 benchmarks.